
    $Ctx = $AuthenticationManager.GetWebLoginClientContext($SiteURL)

    If($List.BaseType -eq "DocumentLibrary" -and $List.Hidden -eq $False -and $List.ItemCount -gt 0 -and $List.Title -Notin("Site Pages","Style Library", "Preservation Hold Library"))

    #Define CAML Query to get Files from the list in batches

    If($Item.FileSystemObjectType -eq "File")

    Write-Progress -PercentComplete ($Counter / $List.ItemCount * 100) -Activity "Processing File $Counter of $($List.ItemCount) in $($List.Title) of $($Web.URL)" -Status "Scanning File '$($File.Name)'"

    $MD5 = New-Object -TypeName 5CryptoServiceProvider
    $HashCode = ::ToString($MD5.ComputeHash($Bytes.Value))

    $Data | Add-Member -MemberType NoteProperty -name "FileName" -value $File.Name
    $Data | Add-Member -MemberType NoteProperty -Name "HashCode" -value $HashCode
    $Data | Add-Member -MemberType NoteProperty -Name "URL" -value $File.ServerRelativeUrl
    $Data | Add-Member -MemberType NoteProperty -Name "FileSize" -value $File.Length

    #Update Position of the ListItemCollectionPosition
    $Query.ListItemCollectionPosition = $ListItems.ListItemCollectionPosition
    }While($Query.
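The fragments above hash each file's bytes and attach the result, together with the file name, URL, and size, to a `$Data` object. A minimal sketch of that step on its own might look like the following. It assumes the bytes are already in memory; the function name `New-FileHashRecord` and its parameters are hypothetical, and the type names used here are the standard .NET `MD5` and `BitConverter` classes, which may or may not match the ones the original script used.

```powershell
# Hypothetical helper: builds one report row for a file whose bytes are already loaded.
function New-FileHashRecord {
    param(
        [string]$FileName,
        [string]$Url,
        [byte[]]$Bytes
    )

    $md5 = [System.Security.Cryptography.MD5]::Create()
    try {
        # BitConverter renders the 16 hash bytes as hex pairs separated by dashes.
        $hashCode = [System.BitConverter]::ToString($md5.ComputeHash($Bytes))
    }
    finally {
        $md5.Dispose()
    }

    # Same Add-Member pattern as the fragments above.
    $data = New-Object -TypeName PSObject
    $data | Add-Member -MemberType NoteProperty -Name "FileName" -Value $FileName
    $data | Add-Member -MemberType NoteProperty -Name "HashCode" -Value $hashCode
    $data | Add-Member -MemberType NoteProperty -Name "URL" -Value $Url
    $data | Add-Member -MemberType NoteProperty -Name "FileSize" -Value $Bytes.Length
    return $data
}
```

Collecting one such record per file gives a table that can be grouped on `HashCode` to spot duplicates.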

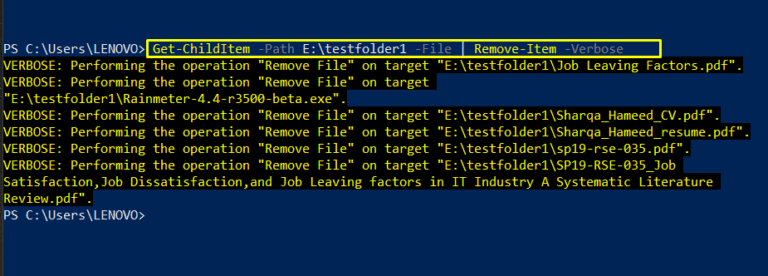

I'm working on a script to search our overcrowded SharePoint Online tenant for duplicate files, and I'm having trouble getting it off the ground. We use MFA, so I've tweaked the script to the point where it authenticates properly, but it fails with the following error:

    Error: Cannot convert argument "query", with value: "", for "GetItems" to type "Microsoft.ShareP ": "Cannot convert the "" value of type " ery" to type ""."

Here's my PowerShell thus far. Thanks in advance to anyone who can point me in the right direction!

    #Add required references to OfficeDevPnP.Core and SharePoint client assembly
    ::LoadFrom("C:\Program Files\WindowsPowerShell\Modules\SharePointPnPPowerShellOnline\.0\")
    Write-Host "Title: " $ -ForegroundColor Green

I would lean towards a PowerShell solution, ideally run on a server in the farm that is NOT highly utilized. I would use PowerShell to iterate over the list and dump the entire thing to a CSV, handle the duplicates in Excel, and come out with a list of IDs to delete (the duplicates). From there, use PowerShell again to delete the items.
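The "list of IDs to delete" step could also be done in PowerShell instead of Excel. The sketch below assumes the export has `ID`, `FileName`, and `HashCode` columns; those column names, the sample rows, and the function name `Get-DuplicateId` are all hypothetical. It keeps the lowest ID in each group of identical hashes and returns the rest.

```powershell
# Hypothetical sample data standing in for the result of Import-Csv on the exported list.
$csv = @'
ID,FileName,HashCode
1,report.docx,AA
2,notes.txt,BB
3,report-copy.docx,AA
4,report-final.docx,AA
'@
$rows = $csv | ConvertFrom-Csv

function Get-DuplicateId {
    param([object[]]$Rows)

    $Rows |
        Group-Object -Property HashCode |
        Where-Object { $_.Count -gt 1 } |
        ForEach-Object {
            # CSV values are strings, so cast to int to sort IDs numerically.
            $_.Group |
                Sort-Object -Property { [int]$_.ID } |
                Select-Object -Skip 1 -ExpandProperty ID
        }
}

$idsToDelete = @(Get-DuplicateId -Rows $rows)
```

Reviewing `$idsToDelete` before passing it to any delete command is a sensible precaution, since which copy counts as the "original" is a judgment call that this sketch settles simply by ID order.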

Of course, it is possible for two files to have the same hash but different content, so if you are really paranoid you might want to check something else in addition to the MD5 hash.
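One possible "something else" is a direct byte-by-byte comparison of the files whose hashes match. The sketch below is only an illustration, not part of the original function: the name `Test-SameBytes` is hypothetical, and it assumes both files are small enough to load into memory (for example with `[System.IO.File]::ReadAllBytes`).

```powershell
# Hypothetical helper: returns $true only if both byte arrays are identical.
function Test-SameBytes {
    param(
        [byte[]]$Left,
        [byte[]]$Right
    )

    # Different lengths can never be equal, so skip the loop entirely.
    if ($Left.Length -ne $Right.Length) { return $false }

    for ($i = 0; $i -lt $Left.Length; $i++) {
        if ($Left[$i] -ne $Right[$i]) { return $false }
    }
    return $true
}
```

Running this only on pairs that already share a hash keeps the extra cost small, because most files are ruled out by the hash alone.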

Need a way to check if two files are the same? Calculate a hash of the files.

    # Calculates the hash of a file and returns it as a string.
    function Get-MD5( $file = $(throw 'Usage: Get-MD5 '))
    $hashAlgorithm = new-object $cryptoServiceProvider
    $hashByteArray = $hashAlgorithm.ComputeHash($stream)
    # We have to be sure that we close the file stream if any exceptions are thrown.

I think about the only new thing here is the trap statement. It'll get called if any exception is thrown; otherwise it's just ignored. Hopefully nothing will go wrong with the function, but if anything does I want to be sure to close any open streams. Anyway, keep this function around: we'll use it along with AddNotes and group-object to write a simple script that can search directories and tell us all the files that are duplicates.

Note that two of the files have the same hash, as expected since they have the same content.
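Since only fragments of the function survive here, the following is a rough sketch of the same idea rather than the original code. It swaps the `trap` statement for `try`/`finally`, which also guarantees the stream is closed, and the names `Get-FileMD5` and `Get-DuplicateFile` are hypothetical. Paths should be absolute, because .NET file APIs resolve relative paths against the process working directory rather than the current PowerShell location.

```powershell
# Hypothetical sketch: returns the MD5 hash of a file as a hex string.
function Get-FileMD5 {
    param([Parameter(Mandatory = $true)][string]$Path)

    $md5 = [System.Security.Cryptography.MD5]::Create()
    $stream = $null
    try {
        $stream = [System.IO.File]::OpenRead($Path)
        $hashBytes = $md5.ComputeHash($stream)
        # BitConverter yields "5D-41-..."; strip the dashes for a compact string.
        return ([System.BitConverter]::ToString($hashBytes) -replace '-', '')
    }
    finally {
        # Runs whether or not an exception was thrown, so the stream never leaks.
        if ($stream) { $stream.Dispose() }
        $md5.Dispose()
    }
}

# Hypothetical sketch: groups files under a directory by hash and keeps groups of 2 or more.
function Get-DuplicateFile {
    param([Parameter(Mandatory = $true)][string]$Directory)

    Get-ChildItem -LiteralPath $Directory -File -Recurse |
        Select-Object FullName, @{ Name = 'Hash'; Expression = { Get-FileMD5 -Path $_.FullName } } |
        Group-Object -Property Hash |
        Where-Object { $_.Count -gt 1 }
}
```

Each group returned by `Get-DuplicateFile` carries the shared hash in `Name` and the matching files in `Group`, so something like `Get-DuplicateFile -Directory 'C:\Temp' | ForEach-Object { $_.Group.FullName }` would list the candidate duplicates (the directory here is only an example).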
